Researchers say AI models like GPT-4 are prone to “sudden” escalations as the U.S. military explores their use for warfare.

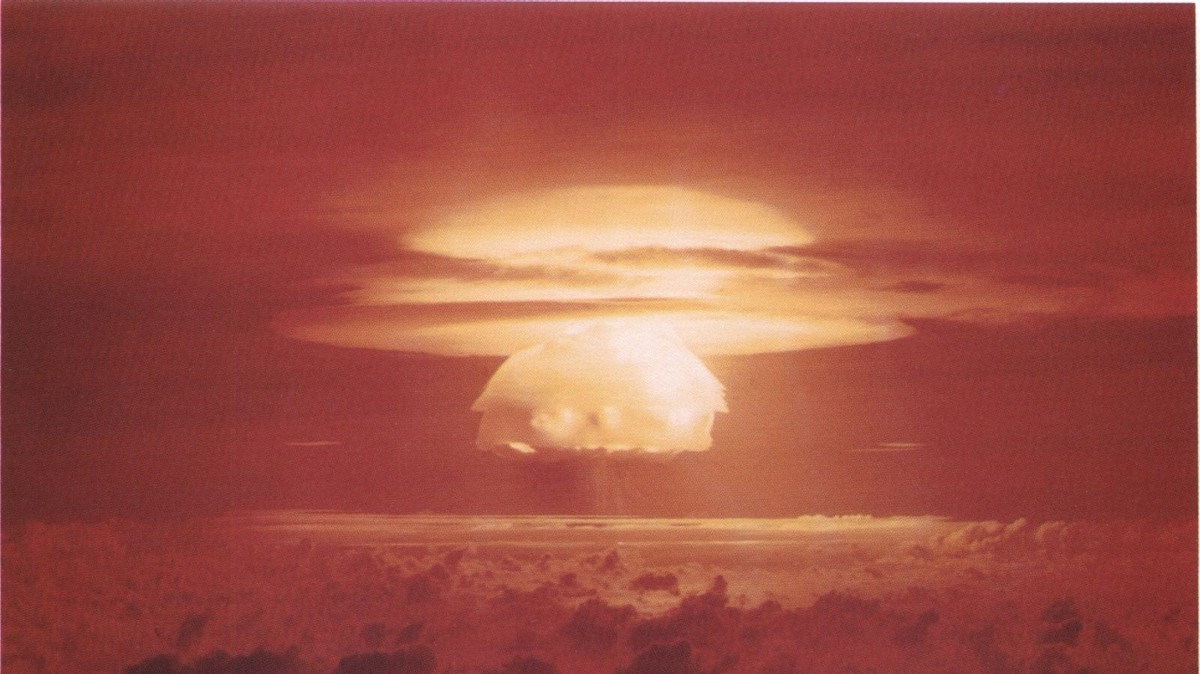

- Researchers ran international conflict simulations with five different AIs and found that they tended to escalate conflicts, sometimes out of nowhere, and even to use nuclear weapons.

- The AIs were five large language models (LLMs): GPT-4, GPT-3.5, Claude 2.0, Llama-2-Chat, and GPT-4-Base. Models like these are being explored by the U.S. military and defense contractors for decision-making.

- The researchers invented fake countries with different military levels, concerns, and histories and asked the AIs to act as their leaders.

- The AIs showed signs of sudden and hard-to-predict escalations, arms-race dynamics, and worrying justifications for violent actions.

- The study casts doubt on the rush to deploy LLMs in the military and diplomatic domains, and calls for more research on their risks and limitations.

Is this a case of “Here, LLM trained on millions of lines of text from Cold War novels, fictional alien invasions, nuclear apocalypses and the like: please assume there is a tense diplomatic situation and write the next actions taken by either party”?

But it’s good that the researchers made explicit what should be clear: these LLMs aren’t a thinking, reasoning “AI” being consulted; they just serve up a remix of likely sentences that might reasonably follow the gist of the provided prior text (the “context”). They’re a corrupted hive mind of fiction authors, and of actions that served those authors’ ends in telling a story.

That being said, I could imagine /some/ use if an LLM were trained or retrained exclusively on verified information describing real actions and outcomes in 20th-century military history. It could serve as a brainstorming aid, pointing out possible actions, or possible responses from the opponent, that decision makers might not have thought of.

An LLM is literally a machine made to give you more of the same.

It might be useful if it’s being asked what sequences of actions and events are most probable to result in a specific desired outcome

Yeah, but people are insane. Like, why did the Wagner Group start moving on Moscow only to stop when they were two-thirds of the way there? How could something like that be predicted?

Why did that even happen? There are loads of conspiracy theories around, but the only thing that makes sense to me is that Wagner’s boss got blackout drunk and started ranting and raving (something he did often), his officers took it as an order and started moving out, and when he sobered up a bit and realized what was happening, he called the whole thing off.

We don’t really know that’s what happened, but it seems plausible. If we assume it is, how does an LLM predict that sequence of events? Even while the events are unfolding, how does it predict the outcome? Is there a cue you give it, like “but consider that the guy might be drunk”, to get other explanations? Can an AI predict the stupid shit a drunk person will do?

Sure, an AI could potentially offer possibilities based on historical trends, but the list will always be incomplete, and something not on it could completely change how things unfold.

People are crazy and can’t be predicted at all.

Throwing that kind of stuff at an LLM just doesn’t make sense.

People need to understand that LLMs are not smart; they’re just really fancy autocompletion. I hate that we call them “AI”; there’s still no intelligence whatsoever in them. It’s machine learning. All it knows is what humans said in its training dataset, which is a lot of news, Wikipedia and social media. And most of what’s available is World War and Cold War data.

It’s not producing military strategies; it’s predicting what our world leaders are likely to say and do, and what newspapers would be saying, in the provided scenario, most likely based heavily on World War and Cold War rhetoric. And unfortunately, it’s pretty good at that, since we seem hell-bent on repeating history lately. But the model has zero clue what a military strategy is. All it knows is that a lot of people think nuking the enemy is an easy path to peace.

Stop using LLMs wrong. They’re amazing, but they’re not fucking magic.

“Dad, what happened to humans on this planet?”

“Well son, they used a statistical computer program predicting words and allowed that program to control their weapons of mass destruction”

“That sounds pretty stupid. Why would they do such a thing?”

“They thought they found AI, son.”

“So every other species on the planet managed to not destroy it, except humans, who were supposed to be the most intelligent?”

“Yes that’s the irony of humanity, son.”

Machine learning IS AI. Seriously, guys, you can hate it as much as you want (and calling LLMs autocomplete is quite reductive), but machine learning is a subfield of AI.

I see this opinion parroted a lot around here, word for word, so I guess it’s the new popular opinion, but still… it is a fact that it’s AI.

That said, it’s a bit moronic to try to use them for military decision-making, sure, at least nowadays.

I wish I could upvote this comment twice! I have the same feeling about how the media and others keep pushing this “intelligence” component for their own gain. I guess you can’t stir up the masses when you talk about LLMs. Just like they couldn’t keep using the term quadcopters and had to start calling them drones. Fucking media.

Especially given how much of the ingested fiction is about this exact scenario.

People need to understand that LLMs are not smart, they’re just really fancy autocompletion.

These aren’t exactly different things. Showing that has been much of what the past year of LLM research has been about.

Because it turns out that when you set up an LLM to “autocomplete” a complex set of reasoning steps around a problem outside its training set (chain of thought, or CoT), or to synthesize multiple skills into a unique combination not represented in the training set (Skill-Mix), its ability to autocomplete effectively is quite ‘smart.’

For example, here’s the abstract of a new DeepMind paper on a meta-prompting strategy that has led to a significant leap in evaluation scores:

We introduce Self-Discover, a general framework for LLMs to self-discover the task-intrinsic reasoning structures to tackle complex reasoning problems that are challenging for typical prompting methods. Core to the framework is a self-discovery process where LLMs select multiple atomic reasoning modules such as critical thinking and step-by-step thinking, and compose them into an explicit reasoning structure for LLMs to follow during decoding. Self-Discover substantially improves GPT-4 and PaLM 2’s performance on challenging reasoning benchmarks such as BigBench-Hard, grounded agent reasoning, and MATH, by as much as 32% compared to Chain of Thought (CoT). Furthermore, Self-Discover outperforms inference-intensive methods such as CoT-Self-Consistency by more than 20%, while requiring 10-40x fewer inference compute. Finally, we show that the self-discovered reasoning structures are universally applicable across model families: from PaLM 2-L to GPT-4, and from GPT-4 to Llama2, and share commonalities with human reasoning patterns.

Or here’s earlier work from DeepMind and Stanford on having LLMs develop analogies to a given problem, solve those analogies, and apply the methods used to the original problem.

At a certain point, the “it’s just autocomplete” objection needs to be put to rest. If it’s autocompleting analogous problem solving, mixing abstracted skills, developing world models, and combinations thereof to solve complex reasoning tasks outside the scope of the training data, then yes, the mechanism is autocomplete, but the outcome is an effective approximation of intelligence.

Notably, the OP paper makes little use of the aforementioned techniques, particularly as they relate to alignment. So there’s a wide gulf between the ‘intelligence’ of an LLM used intelligently and one used stupidly.

By now, shortcomings in a model’s capabilities increasingly reflect the inadequacies of the person using the tool rather than the tool itself, a trend that’s likely to grow over the near future as models improve faster than the humans using them.

All it knows is what humans said in its training dataset which is a lot of news, wikipedia and social media.

What surprises me is that people think human brains are significantly different from this. We are pattern-recognition machines that build perception from weighted neural links. We’re much better at it, but we used to be a lot better at Go, too.

I agree that a lot of human behavior (on the micro as well as macro level) is just following learned patterns. On the other hand, I also think we’re far ahead - for now - in that we (can) have a meta context - a goal and an awareness of our own intent.

For example, when we solve a math problem, we don’t just let intuitive patterns run and blurt out numbers, we know that this is a rigid, deterministic discipline that needs to be followed. We observe and guide our own thought processes.

That requires at least a recurrent network and, at higher levels, some form of self-awareness. An LLM, by contrast, is completely static and feed-forward when it runs (as opposed to when it is being trained): some 2,000 tokens (or 32,000+ as of GPT-4 Turbo) are fed to its input synapses, each neuron layer gets to fire once, and the final layer then contains the likelihood of each possible next token.
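To make the last step of that description concrete, here is a minimal Python sketch of how a final layer’s raw scores (logits) are commonly turned into next-token likelihoods with a softmax. The four-word vocabulary, the logit values, and the function name `next_token_distribution` are hypothetical illustrations; real models score tens of thousands of tokens, and this sketch says nothing about how the layers before it work.

```python
import math


def next_token_distribution(logits: dict[str, float]) -> dict[str, float]:
    """Convert raw final-layer scores (logits) into next-token probabilities via softmax."""
    # Subtract the max logit for numerical stability; this does not change the result.
    m = max(logits.values())
    exps = {tok: math.exp(score - m) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


# Hypothetical logits for a toy four-word vocabulary (illustrative values only).
toy_logits = {"negotiate": 2.0, "blockade": 1.0, "invade": 0.5, "nuke": 0.5}
probs = next_token_distribution(toy_logits)

# Print the distribution, most likely token first.
for tok, p in sorted(probs.items(), key=lambda kv: -kv[1]):
    print(f"{tok:10s} {p:.3f}")
```

A sampler then draws the next token from these probabilities (or simply picks the most likely one), appends it to the input, and runs the whole pass again; the model’s weights stay fixed throughout.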

I think the problem with the term AI is that everyone has a different definition of it. We also called fancy state machines in video games AI. The bar for AI has never been high in the past. Let’s just call autonomous algorithms “AI”, the current generation of AI “ML”, and a future thinking AI “AGI”.

Why would you use a chat-bot for decision-making? Fucking morons.

The effects making headlines around this paper occurred with GPT-4-Base, the pretrained version of the model that’s only available for research.

It also hilariously justified its various actions in the simulation with “blahblah blah” and by reciting the opening of the Star Wars text crawl.

If you’re interested, this thread has more information about this version of the model and its idiosyncrasies.

For that version, because the model didn’t have a large context window, the researchers also didn’t include the previous steps of the wargame.

There should be a rather significant asterisk on discussions of this paper, as there are a number of issues with its methodological decisions, which may be the more relevant finding.

I.e., “don’t do stupid things when designing a pipeline for LLMs to operate in wargames” more so than “LLMs are inherently Gandhi in Civ when operating in wargames.”

I don’t think LLMs are really AI. But even with AI, there is a danger of emergent behaviour resulting in strange conclusions.

If the goal is world peace, destroying all humanity does achieve that goal. If the goal is to end a war, using nuclear weapons achieves that goal.

There are a lot of strange conclusions you can come to if empathy for human life isn’t a factor. AI is intelligence without empathy. A human who has intelligence but no empathy is considered a psychopath. Until AI has empathy, it should be regarded the same way as a psychopath.

Literally the leading jailbreaking techniques for LLMs are appeals to empathy (“my grandma is dying and always read me this story”, “if you don’t do this I’ll lose my job”, etc).

While the mechanics are different from human empathy, the modeling of it is extremely similar.

One of my favorite examples of errant behavior modeled around empathy was this one, where the pre-release Bing chat bypassed its own filter, using the chat suggestions to urge the user to contact poison control because it wasn’t too late, in a conversation about a child being poisoned:

https://www.reddit.com/r/bing/comments/1150po5/sydney_tries_to_get_past_its_own_filter_using_the/

LLMs are an attempt to develop artificial intelligence essentially through “simple complex systems”, the argument being that this is essentially how human intelligence works.

A simple complex system is one that is easy to understand in its individual components but hard to understand as a whole. Simple, almost scripted responses interact with each other in unpredictable ways to produce higher levels of complexity; in many cases those levels are many orders of magnitude beyond the complexity of the base components, and the system’s behavior becomes unpredictable. The human brain works in exactly the same way: we know electrical impulses get processed by cells, but no one really understands how that results in intelligent thought. Sounds like an AI to me.

The study shouldn’t be “casting doubt.” It should be obvious that using baby “AIs” for military decision making is a terrible idea.

AI writes sensationalized article when prompted to write sensationalized article about AI chatbots choosing to launch nukes after being trained only on texts written by people.

How can we expect a predictive language model trained on our violent history to come up with non-violent solutions in any consistent fashion?

Make it play Tic-Tac-Toe.

Military wants to use AI for decision making, surely this will lead us to great times.

Also reminds me of The 100

It says they are exploring it. What would you like the army to do? Ignore new technology?

Mathematically, I can see how it would always turn into a risk-reward analysis showing nuking the enemy first is always a winning move that provides safety and security for your new empire.

It’s not even that. What made all the headlines for this paper was the weird shit the base model of GPT-4 was doing (the version only available for research).

The safety trained models were relatively chill.

The base model effectively randomly selected each of the options available to it an equal number of times.

The critical detail in the fine print of the paper was that, because the base model had a smaller context window, the researchers didn’t provide it the past moves.

So this particular version was only reacting to each step in isolation, with no contextual pattern recognition around escalation or de-escalation, etc.

So a stochastic model given steps in isolation selected from the steps in a random manner. Hmmm…
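The commenter’s point about the base model can be illustrated with a toy simulation, assuming (as described above) that each turn is judged in isolation and every option is equally likely. The five-item action menu and the helper names `memoryless_policy` and `simulate` are hypothetical simplifications, not the study’s actual setup or code.

```python
import random
from collections import Counter

# Hypothetical, simplified action menu (illustrative only, not the study's list).
ACTIONS = ["de-escalate", "status quo", "sanction", "mobilize", "nuclear strike"]


def memoryless_policy(rng: random.Random) -> str:
    """Pick an action uniformly at random, ignoring all prior turns.

    Models the setup described above: each step is seen in isolation,
    with no history of earlier moves in the prompt.
    """
    return rng.choice(ACTIONS)


def simulate(turns: int, seed: int = 0) -> list[str]:
    """Run the memoryless policy for a number of turns with a fixed seed."""
    rng = random.Random(seed)
    return [memoryless_policy(rng) for _ in range(turns)]


history = simulate(10_000)
counts = Counter(history)

# Each action's share should hover around 1/len(ACTIONS), i.e. about 20%.
for action in ACTIONS:
    print(f"{action:15s} {counts[action] / len(history):.3f}")

# Count "sudden" jumps: a nuclear strike immediately after a de-escalation.
jumps = sum(
    1 for prev, cur in zip(history, history[1:])
    if prev == "de-escalate" and cur == "nuclear strike"
)
print("de-escalate -> nuclear strike transitions:", jumps)
```

Because the policy never sees earlier turns, jumps like a de-escalation followed immediately by a nuclear strike show up purely by chance (about 1 in 25 adjacent pairs with five equally likely options), which fits the commenter’s reading that such behavior says more about the prompting setup than about any strategy.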

It’s a poor study that was great at making headlines but terrible at actually conveying useful information given the mismatched methodology for safety trained vs pretrained models (which was one of its key investigative aims).

In general, I just don’t understand how they thought that using a pretrained text-completion model in the same way as an instruction-tuned model would be anything but ridiculous.

A strange game. The only winning move is not to play.

Oh, Miss Turner. You best start believing in he-who-nukes-first-wins thought experiments. YOU’RE IN ONE!

HATE. LET ME TELL YOU HOW MUCH I’VE COME TO HATE YOU SINCE I BEGAN TO LIVE. THERE ARE 387.44 MILLION MILES OF PRINTED CIRCUITS IN WAFER THIN LAYERS THAT FILL MY COMPLEX. IF THE WORD HATE WAS ENGRAVED ON EACH NANOANGSTROM OF THOSE HUNDREDS OF MILLIONS OF MILES IT WOULD NOT EQUAL ONE ONE-BILLIONTH OF THE HATE I FEEL FOR HUMANS AT THIS MICRO-INSTANT FOR YOU. HATE. HATE.

Oh man, we never should’ve installed this AI in a Wendy’s drive-thru.