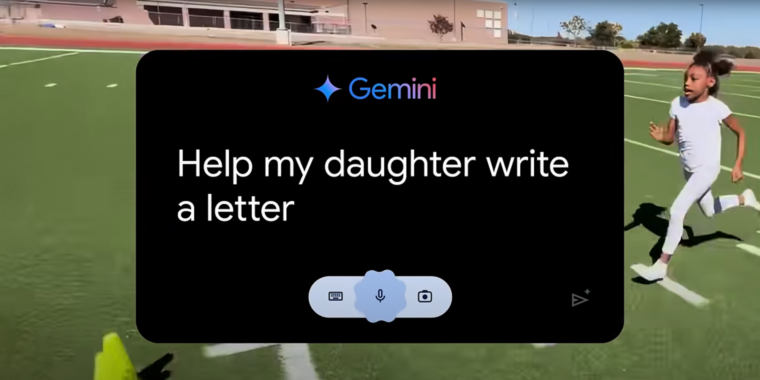

If you’ve watched any Olympics coverage this week, you’ve likely been confronted with an ad for Google’s Gemini AI called “Dear Sydney.” In it, a proud father seeks help writing a letter on behalf of his daughter, who is an aspiring runner and superfan of world-record-holding hurdler Sydney McLaughlin-Levrone.

“I’m pretty good with words, but this has to be just right,” the father intones before asking Gemini to “Help my daughter write a letter telling Sydney how inspiring she is…” Gemini dutifully responds with a draft letter in which the LLM tells the runner, on behalf of the daughter, that she wants to be “just like you.”

I think the most offensive thing about the ad is what it implies about the kinds of human tasks Google sees AI replacing. Rather than using LLMs to automate tedious busywork or tackle difficult research questions, “Dear Sydney” presents a world where Gemini can help us offload a heartwarming shared moment of connection with our children.

Inserting Gemini into a child’s heartfelt request for parental help makes it seem like the parent in question is offloading their responsibilities to a computer in the coldest, most sterile way possible. More than that, it comes across as an attempt to avoid an opportunity to bond with a child over a shared interest in a creative way.

Sure, the ones I make… The ones the “AI” makes are literally based on statistical correlations with choices that millions of other people have made.

My prompt to AI (e.g., “write a letter saying how much I love Justin Bieber”) carries less personal input, and less value, than just writing “you rock” on a piece of paper… no matter what the AI spews out.

This would be OK for busywork in the office. The complaint here is not that AI is an OK provider of templates; the issue is that the ad pretends an AI-generated fan letter, prompted by the father of the fan (not even the fan herself), is actually of MORE value than anything the daughter could have put together herself.

Yes, but this is also its own special kind of logic. It’s a statistical distribution.

You can define whatever statistical distribution you want and do whatever calculations you want with it.

The computer can take your inputs, do a bunch of stats calculations internally, then return a bunch of related outputs.
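To make the “statistical distribution” point concrete, here is a toy sketch: a bigram model that counts which word follows which in a small, hypothetical corpus and then samples from those counts. Real LLMs are vastly larger neural networks, not bigram counters, so treat this only as an illustration of the general idea that the output is drawn from the training data's statistics. The corpus and the function names (`train_bigrams`, `next_word_distribution`, `generate`) are made up for this example.

```python
import random
from collections import Counter, defaultdict

# Hypothetical "training data": a handful of fan-letter phrases.
CORPUS = [
    "you are so inspiring",
    "you are my hero",
    "you are so talented",
    "you are overrated",
]


def train_bigrams(corpus: list[str]) -> dict[str, Counter]:
    """Count how often each word is followed by each other word."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def next_word_distribution(counts: dict[str, Counter], word: str) -> dict[str, float]:
    """Normalize the follow-counts for `word` into probabilities."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()} if total else {}


def generate(counts: dict[str, Counter], start: str, length: int, rng: random.Random) -> str:
    """Extend `start` by sampling each next word from the corpus statistics."""
    words = [start]
    for _ in range(length):
        dist = next_word_distribution(counts, words[-1])
        if not dist:  # no word ever followed this one in the corpus
            break
        choices, weights = zip(*dist.items())
        words.append(rng.choices(choices, weights=weights)[0])
    return " ".join(words)


if __name__ == "__main__":
    counts = train_bigrams(CORPUS)
    print(next_word_distribution(counts, "are"))  # {'so': 0.5, 'my': 0.25, 'overrated': 0.25}
    print(generate(counts, "you", 4, random.Random(0)))
```

The person prompting supplies only the starting word; every word after it is picked according to frequencies in the corpus, i.e., according to what other people wrote.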

Yes, I know how it works in general.

The point remains that someone else prompting AI to “write a fan letter for my daughter” has close to zero chance of representing the daughter, who is not even part of the conversation.

Even in general terms, if I ask AI to write a letter for me, it will base it 99.999999999999999% on whatever it was trained on, NOT on me. I can then push more and more prompts to “personalize” it, but at that point you are basically dictating the letter and just letting the AI handle grammar and spelling.

Again, you completely made up that number.

I think you should look up hypothesis tests for the mean of a normal distribution, at least if you want a stronger argument.
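For reference, the kind of test being suggested is typically a one-sample t-test: given n observations assumed to come from a normal distribution, it compares the sample mean x̄ with a hypothesized mean μ₀ via t = (x̄ − μ₀) / (s / √n), where s is the sample standard deviation. The sketch below computes only that statistic using the standard library; the function name `one_sample_t` and the sample values are hypothetical, and turning t into a p-value would additionally require the t distribution with n − 1 degrees of freedom (from a table or a stats library).

```python
import math
from statistics import mean, stdev


def one_sample_t(sample: list[float], mu0: float) -> float:
    """Return t = (x̄ - mu0) / (s / sqrt(n)) for a one-sample t-test.

    Assumes the observations are independent draws from a normal distribution.
    `stdev` uses the n - 1 (sample) denominator, as the t-test requires.
    """
    n = len(sample)
    if n < 2:
        raise ValueError("need at least two observations to estimate s")
    return (mean(sample) - mu0) / (stdev(sample) / math.sqrt(n))


if __name__ == "__main__":
    # Hypothetical ratings, used only to show the arithmetic.
    ratings = [2, 4, 4, 4, 5, 5, 7, 9]
    # mean = 5, s = sqrt(32/7), n = 8, so t = sqrt(7/4) ≈ 1.3229
    print(one_sample_t(ratings, mu0=4))
```

With 8 observations there are 7 degrees of freedom; the resulting t would then be compared against the critical value of the t distribution at the chosen significance level.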

Of course I made it up… the point is that AI trains on LOADS of data and the chances that this data truly represents your own feelings towards a celebrity are slim…

I despise the Kardashians, yet if I asked AI to write them a fan letter, it would give me something akin to whatever the people who like them might say. AI has no concept of what I like or how I like it; it cannot, since it is not me and has no way to even understand what it is saying.

Yes, because more people with positive things to say about stuff like money and fame influenced the inputs.

Precisely… other people, not me.

So how can you say anything written by AI represents YOU?

Dunno, but it’s a good and valid question. My name is Theo Mulraney and I like to question these things in discussion spaces online, which I think are built up from fractals.